Next-Generation Robotics Education

Learn about the DuckieSky curriculum under development by Dr. Tellex.

Exploring the Role of Robotics in Society

HCRI was started to explore the growing role robotics plays in our daily lives.

Public Lectures

Watch some of our public lectures and talks.

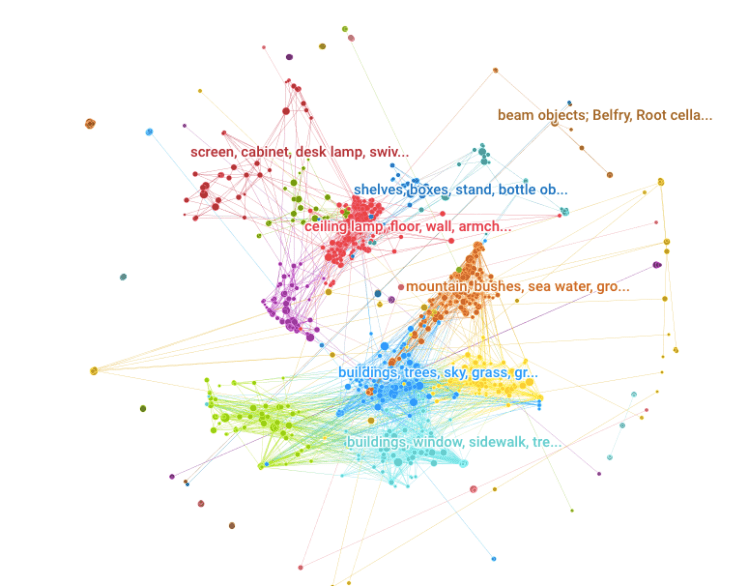

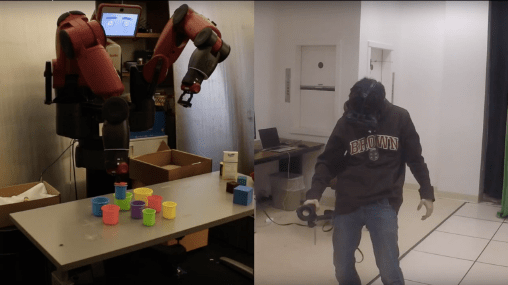

Cutting-Edge Research

From moral norms in robots to integrated AI, our research covers cutting-edge topics in robotics and artificial intelligence.

Raising Awareness in Conferences and Events

HCRI helps organize numerous talks, symposia, and events related to robotics and AI.

Helping Students

In our makerspace, students have built award-winning robots. Learn more about them here:

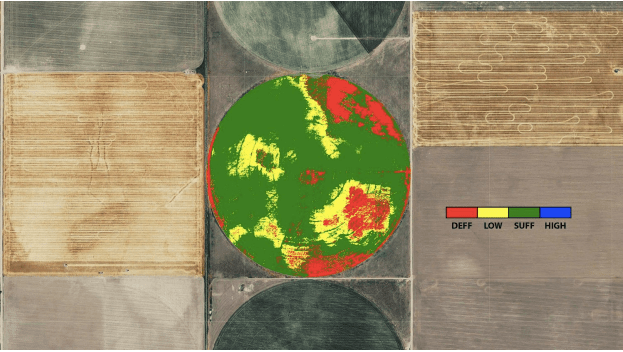

Cloud Agronomics

Cloud Agronomics, which recently raised a $7 million Series A round, got its start in the HCRI makerspace.

Walkerbot

Initially sponsored by Brown’s OVPR, Walkerbot went on to win its student team $40,000 in the MRADI challenge.

TBO

TBO, the tablebot, was the first robot built in partnership by the Walkerbot team.

Want to learn more?

Contact Us

Reach out for more information.